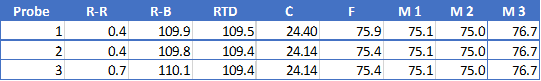

A few RTD users have reported variation in their temperature readings when comparing probes against a certified/known-good probe or against each other. This should not be the case. RTDs should deliver both high accuracy and repeatability; that's the point of using them.

Here is a recommended procedure to identify the source of the discrepancy (the amplifier or the probe), assuming you have the RTD amp set up correctly (wiring, jumpers, pad resistor).

1. Apply a jumper across the amplifier wire-probe terminals and a known 100 ohm resistor across the probe-probe terminals. That will simulate a probe with no resistance in the wires and 100 ohms at the probe. A Pt100 probe should output 100 ohms at 0 °C, so with this resistor you should get a reading of 0 in BC from the RTD calibration alone. If the reading is off, the amplifier or its calibration is the likely source rather than the probe.

2. Put a probe into a medium along with a certified/known-good temperature probe. Give them time to settle and note the temperature on the certified probe. Using a quality ohmmeter, measure the wire-probe resistance and the probe-probe resistance. Subtract the former from the latter to get the actual probe resistance. Check that resistance against a Pt100 resistance chart at the noted temperature to see how close it is. Assuming the probe is properly constructed with platinum, its resistance should closely match the chart value. As above, if the medium is at exactly 0 °C (a quality ice-water bath), the resistance should be 100 ohms.
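The arithmetic in step 2 can be sketched in a few lines of Python, in case you would rather compute the expected resistance than look it up. This is only a sketch: it assumes a standard Pt100 (alpha = 0.00385) that follows the IEC 60751 Callendar-Van Dusen equation, and the function names and sample readings are made up for illustration.

```python
import math

# IEC 60751 Callendar-Van Dusen coefficients for a standard Pt100
# (assumption: your probe is the common alpha = 0.00385 type).
R0 = 100.0       # ohms at 0 °C
A = 3.9083e-3
B = -5.775e-7
C = -4.183e-12   # only applies below 0 °C


def pt100_resistance(temp_c):
    """Expected Pt100 resistance in ohms at temp_c (degrees Celsius)."""
    r = R0 * (1 + A * temp_c + B * temp_c ** 2)
    if temp_c < 0:
        r += R0 * C * (temp_c - 100) * temp_c ** 3
    return r


def pt100_temperature(resistance):
    """Temperature in °C for a resistance of 100 ohms or more (0 °C and up)."""
    return (-A + math.sqrt(A * A - 4 * B * (1 - resistance / R0))) / (2 * B)


def check_probe(wire_probe_ohms, probe_probe_ohms, reference_temp_c):
    """Return (probe ohms, expected ohms, difference) for the step 2 check."""
    probe_ohms = probe_probe_ohms - wire_probe_ohms  # remove lead resistance
    expected = pt100_resistance(reference_temp_c)
    return probe_ohms, expected, probe_ohms - expected


if __name__ == "__main__":
    # Hypothetical ohmmeter readings with the certified probe showing 20.0 °C.
    probe, expected, diff = check_probe(0.4, 108.2, 20.0)
    print(f"probe: {probe:.2f} ohm, expected: {expected:.2f} ohm, diff: {diff:+.2f} ohm")
    print(f"probe resistance corresponds to {pt100_temperature(probe):.2f} °C")
```

Near room temperature a Pt100 changes by roughly 0.39 ohms per degree Celsius, so even a small difference in ohms is a noticeable temperature error.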

Here is a link to an RTD chart:

https://www.sterlingsensors.co.uk/pt100-resistance-table

Here is a link to an online calculator as well:

https://www.peaksensors.co.uk/resources/rtd-calculator-temperature-resistance/
